1. The War for Seconds: Why Latency Became the Key Metric in Video Surveillance

Ten years ago, video surveillance was measured by different standards: resolution, field of view, frame rate, archive depth. Latency barely concerned anyone, because users were either watching recorded footage or a local live stream within a single network. Camera, recorder, and monitor all lived inside one building, on one switch, in one physical reality. The internet in this setup was secondary, if present at all.

But once video surveillance moved to the cloud, the rules changed. Cameras went online, users moved to mobile devices, and networks became unpredictable. LTE, 5G, public Wi-Fi, corporate proxies, and carrier-grade NAT turned live video delivery into a problem where every second of delay matters. When a security guard or business owner opens a camera in a mobile app, they expect to see what is happening now, not what happened ten seconds ago. At that moment, video surveillance stops being “recording” and becomes an interactive interface to the real world.

This is where classic protocols began to crack. RTSP, designed for local networks, turned out to be poorly suited to the public internet and NAT traversal. HLS, excellent for large-scale streaming, proved too slow for live scenarios. RTMP, long considered the low-latency standard, lost its playback role when browsers dropped Flash; it survives mainly as an ingest protocol and never became part of modern web playback. The industry found itself searching for a new balance between speed, resilience, and control.

SRT did not appear as a revolution, but as an answer to a very specific engineering need. Haivision developed it for broadcast contribution over the public internet, not for video surveillance, yet surveillance turned out to be one of the fields where SRT’s properties match real-world needs almost perfectly. Low latency, UDP-based transport, resilience to packet loss, built-in encryption, and independence from the browser stack make SRT a natural choice for mobile applications and operator interfaces. To understand why, we first need to understand what SRT actually is.

2. SRT Without Myths: UDP, Reliability, and Time Control

Secure Reliable Transport is often described as “UDP with a brain,” and there is some truth to that. At its core, SRT is built on UDP, a protocol that guarantees neither delivery nor ordering of packets but adds almost no overhead or delay of its own. That’s why UDP has been used for decades in real-time systems, from VoIP to video conferencing. However, raw UDP is too fragile for the internet, where packet loss and jitter are normal.

SRT solves this problem differently from TCP. It does not try to deliver every byte at any cost. Instead, it introduces a time window. Every packet is scheduled for delivery to the application at a fixed offset (the configured latency) after it was sent, and lost packets can be retransmitted only until that deadline. If a lost packet has not arrived by then, the receiver drops it and the video continues. As a result, the stream does not “freeze” or accumulate delay the way a TCP connection does under poor network conditions.
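The deadline rule can be illustrated with a toy model. The sketch below is not libsrt code: the `Packet` and `deliver` names are hypothetical, and it assumes sender and receiver share one clock and that `arrival_ms` already reflects any retransmission. Each packet is due at its send time plus the latency; anything that arrives later is dropped rather than waited for.

```python
from dataclasses import dataclass


@dataclass
class Packet:
    seq: int
    sent_ms: float     # sender timestamp
    arrival_ms: float  # when the packet (or its retransmission) arrived; inf if never


def deliver(packets: list[Packet], latency_ms: float) -> tuple[list[int], list[int]]:
    """Split packets into (delivered, dropped) under a fixed latency window.

    Each packet is due at sent_ms + latency_ms. If it has arrived by then it
    goes to the decoder; otherwise it is dropped and playback moves on.
    """
    delivered: list[int] = []
    dropped: list[int] = []
    for p in sorted(packets, key=lambda p: p.seq):
        deadline = p.sent_ms + latency_ms
        if p.arrival_ms <= deadline:
            delivered.append(p.seq)
        else:
            dropped.append(p.seq)
    return delivered, dropped


packets = [Packet(1, 0, 40), Packet(2, 10, 200), Packet(3, 20, 60)]
print(deliver(packets, latency_ms=120))  # ([1, 3], [2])
print(deliver(packets, latency_ms=250))  # ([1, 2, 3], [])
```

With a 120 ms window, the packet whose retransmission lands 190 ms after it was sent is skipped; widening the window to 250 ms saves it, at the cost of 130 ms of extra delay on every frame.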

This fundamental difference is the key to understanding why SRT works so well in video surveillance. Video is a stream where continuity matters more than absolute accuracy. Losing a few packets may cause artifacts, but losing time destroys the very idea of live monitoring. SRT lets the developer set this balance explicitly: increase latency to improve resilience, or reduce it to minimize delay. This control is especially important in mobile networks, where channel quality can change from one second to the next.
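A rough way to reason about this trade-off: each recovery attempt costs about one round trip (the receiver reports the loss, the sender retransmits), so the latency window divided by the RTT bounds how many attempts a lost packet gets. The helper names below are hypothetical and the model ignores loss-detection time; the 120 ms floor is libsrt’s default `SRTO_LATENCY` in live mode. Published SRT deployment guidance sizes latency as a multiple of RTT for the same reason.

```python
import math

# libsrt's default SRTO_LATENCY in live mode, in milliseconds
DEFAULT_SRT_LATENCY_MS = 120


def recovery_rounds(latency_ms: float, rtt_ms: float) -> int:
    """Approximate number of loss-report/retransmit round trips that fit in the window."""
    if rtt_ms <= 0:
        raise ValueError("rtt_ms must be positive")
    return math.floor(latency_ms / rtt_ms)


def latency_for_rounds(rounds: int, rtt_ms: float,
                       floor_ms: float = DEFAULT_SRT_LATENCY_MS) -> float:
    """Window needed for `rounds` recovery attempts, never below floor_ms."""
    return max(floor_ms, rounds * rtt_ms)


print(recovery_rounds(DEFAULT_SRT_LATENCY_MS, rtt_ms=30))  # 4
print(recovery_rounds(DEFAULT_SRT_LATENCY_MS, rtt_ms=80))  # 1
print(latency_for_rounds(4, rtt_ms=80))                    # 320
```

On a 30 ms RTT wired link the default window allows about four attempts; on an 80 ms mobile path it allows only one, which suggests raising the window on such paths.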

SRT also has encryption built into the protocol. It is not TLS layered on top of something else: setting a passphrase on both ends enables AES encryption of the payload, with 128-, 192-, or 256-bit keys. For video surveillance, where video is almost always personal data, this is critical. In a world where cameras watch streets, offices, entrances, and private homes, sending video in clear text is simply unacceptable. SRT solves this without building complex layers on top of RTSP or inventing proprietary solutions.
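Enabling encryption is a matter of configuration on both ends. As a sketch, the hypothetical helper below builds a caller URI in the query-parameter style used by libsrt’s `srt-live-transmit` tool, where `latency` is in milliseconds and `passphrase`/`pbkeylen` map to the `SRTO_PASSPHRASE` and `SRTO_PBKEYLEN` socket options. It checks the two constraints libsrt imposes: passphrases of 10 to 79 characters and key lengths of 16, 24, or 32 bytes. Other tools name or scale these parameters differently (FFmpeg’s `srt` protocol, for instance, takes `latency` in microseconds), and the host name is a placeholder.

```python
from urllib.parse import urlencode

# Key lengths accepted by SRTO_PBKEYLEN, in bytes: AES-128, AES-192, AES-256
VALID_PBKEYLEN = {16, 24, 32}


def encrypted_srt_uri(host: str, port: int, passphrase: str,
                      pbkeylen: int = 16, latency_ms: int = 120) -> str:
    """Build an srt:// caller URI with encryption enabled (srt-live-transmit style)."""
    if not 10 <= len(passphrase) <= 79:
        raise ValueError("SRT passphrases must be 10 to 79 characters long")
    if pbkeylen not in VALID_PBKEYLEN:
        raise ValueError("pbkeylen must be 16, 24 or 32")
    query = urlencode({
        "mode": "caller",
        "latency": latency_ms,
        "passphrase": passphrase,
        "pbkeylen": pbkeylen,
    })
    return f"srt://{host}:{port}?{query}"


print(encrypted_srt_uri("relay.example.com", 9000, "correct-horse-battery"))
# srt://relay.example.com:9000?mode=caller&latency=120&passphrase=correct-horse-battery&pbkeylen=16
```

In production the passphrase would better come from a secrets store than from a URI that may end up in logs.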

It is also important to understand what SRT is not. It is not a codec, not a container, and not a player. SRT does not know what H.264 or H.265 is; it just moves bytes. In real surveillance systems, SRT most often carries an MPEG-TS stream containing H.264 or H.265 video. This keeps it compatible with the existing ecosystem of cameras and encoders, without requiring radical changes at the video source.
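MPEG-TS packets are 188 bytes long and start with the sync byte `0x47`, and SRT’s default live-mode payload is 1316 bytes, exactly seven TS packets. The sketch below shows how a sender might slice an aligned TS byte stream into SRT-sized payloads; `srt_payloads` is a hypothetical helper, and real senders (libsrt applications, FFmpeg) do this internally.

```python
TS_PACKET_SIZE = 188
TS_SYNC_BYTE = 0x47
TS_PACKETS_PER_PAYLOAD = 7  # 7 x 188 = 1316 bytes, libsrt's default live-mode payload


def srt_payloads(ts: bytes) -> list[bytes]:
    """Slice an aligned MPEG-TS byte stream into SRT-sized payloads."""
    if len(ts) % TS_PACKET_SIZE:
        raise ValueError("stream is not aligned to 188-byte TS packets")
    for offset in range(0, len(ts), TS_PACKET_SIZE):
        if ts[offset] != TS_SYNC_BYTE:
            raise ValueError(f"missing 0x47 sync byte at offset {offset}")
    chunk = TS_PACKET_SIZE * TS_PACKETS_PER_PAYLOAD
    return [ts[i:i + chunk] for i in range(0, len(ts), chunk)]


# Ten dummy TS packets: sync byte followed by zero padding
stream = (bytes([TS_SYNC_BYTE]) + bytes(187)) * 10
print([len(p) for p in srt_payloads(stream)])  # [1316, 564]
```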

But things get truly interesting when SRT meets P2P, a term that in video surveillance has long been more marketing than technology.

3. The P2P That Doesn’t Exist: How Cloud Cameras Really Connect

If you believe the brochures, most cloud cameras work on a P2P principle. The camera supposedly connects directly to the user’s phone, bypassing servers, clouds, and intermediaries. It sounds great, but it has very little to do with reality. On the real internet, cameras almost always sit behind NAT, often several layers of it, while mobile devices sit behind carrier-grade NAT. Under these conditions, direct connections work only in a limited set of scenarios and with many caveats.

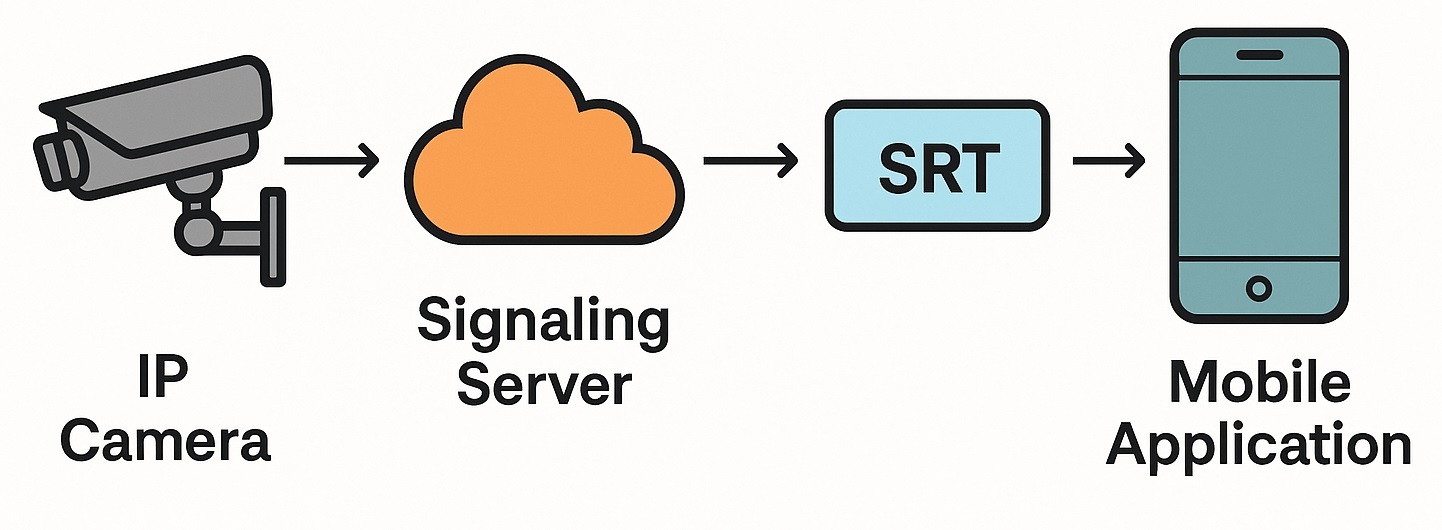

The real architecture of cloud video surveillance almost always includes a server. Sometimes it is used only for signaling and authentication; sometimes for full video relaying. In most cases, the system tries to establish a direct connection between the camera and the client, but at the first sign of trouble it falls back to a server relay. The user still sees a “P2P” interface, even though the video is actually going through the cloud.
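The fallback logic itself is simple to express. In this asyncio sketch, `try_direct` and `use_relay` are hypothetical coroutines standing in for whatever direct-connection and relay logic a platform actually uses; the direct attempt gets a short timeout, and any timeout or socket error sends the client to the relay.

```python
import asyncio
from typing import Awaitable, Callable

# A hypothetical connector returns a label describing the path it opened
Connector = Callable[[], Awaitable[str]]


async def open_stream(try_direct: Connector, use_relay: Connector,
                      direct_timeout_s: float = 2.0) -> str:
    """Try a direct path first; fall back to the server relay on timeout or error."""
    try:
        return await asyncio.wait_for(try_direct(), timeout=direct_timeout_s)
    except (asyncio.TimeoutError, OSError):
        return await use_relay()


async def direct_blocked() -> str:
    await asyncio.sleep(10)  # simulate hole punching that never succeeds
    return "direct"


async def relay() -> str:
    return "relay"


print(asyncio.run(open_stream(direct_blocked, relay, direct_timeout_s=0.1)))  # relay
```

The user-facing “P2P” label stays the same whichever branch wins, which is exactly why the relay is invisible in the interface.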

SRT fits this model well. With an intermediary server, it needs none of the ICE negotiation logic that WebRTC relies on. The camera or edge server makes its stream available over SRT, and clients connect to the server to play it. The client almost always initiates the connection, which is critical for NAT traversal: its outbound UDP traffic opens a path through the NAT, while the server acts as the listener, accepting incoming UDP connections on a publicly reachable address. This is a simple and reliable scheme that scales well for scenarios where one camera has anywhere from one to ten viewers.
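On the server side, the listener needs to know which camera a caller wants and whether it is publishing or watching. The SRT Access Control guidelines in the libsrt repository propose encoding this in the `streamid` socket option with a `#!::` prefix and comma-separated keys such as `u` (user), `r` (resource), and `m` (mode, e.g. `publish` or `request`). The helper below is a hypothetical sketch of that convention, assuming the camera pushes over SRT; whether a given SRT server actually parses this syntax is an assumption to verify.

```python
from typing import Optional

ALLOWED_MODES = {"publish", "request"}  # per SRT Access Control: publish = send, request = receive


def access_control_streamid(resource: str, mode: str, user: Optional[str] = None) -> str:
    """Build a '#!::'-style streamid as proposed in the SRT Access Control guidelines."""
    if mode not in ALLOWED_MODES:
        raise ValueError("mode must be 'publish' or 'request'")
    fields = [f"r={resource}", f"m={mode}"]
    if user:
        fields.insert(0, f"u={user}")
    return "#!::" + ",".join(fields)


# Camera (caller) pushing to the listener, and a phone (caller) asking to play it
print(access_control_streamid("cam-042", "publish"))                 # #!::r=cam-042,m=publish
print(access_control_streamid("cam-042", "request", user="guard1"))  # #!::u=guard1,r=cam-042,m=request
```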

It is important to note that this approach is neither deception nor compromise. It is a conscious engineering choice. Fully serverless P2P scales poorly in video surveillance, is hard to debug, and is unstable in mass-market deployments. Even a minimal server provides control, security, and centralized access management. In this architecture, SRT is the transport layer between server and client, not a magical way to bypass every network limitation.

This is also where it becomes clear why mobile applications outperform browsers in terms of latency. The difference lies not only in the protocol, but in the entire delivery model.

4. Why Mobile Apps Feel “More Live” Than the Browser: Architecture Matters

When a user opens a camera in a browser, they almost always interact with the HTML5 video element. This element supports a limited set of protocols and formats, and for live streams the main one is HLS: Safari and some mobile browsers play it natively, while others typically rely on Media Source Extensions libraries such as hls.js. HLS is designed for reliable video delivery over HTTP. It scales well, caches easily, and works beautifully with CDNs. But this universality comes at the cost of latency.

HLS splits video into segments that the client downloads over HTTP. The player keeps several segments buffered to smooth out network fluctuations; the HLS specification (RFC 8216) advises clients not to start playback less than three target durations from the end of the playlist. This means there is always a delay between real time and what the user sees. Even with aggressive tuning, it rarely drops below several seconds. For movies or broadcasts, that is fine. For video surveillance, it is a serious problem.
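The arithmetic behind “several seconds” is straightforward. If the player starts three target durations behind the live edge, as RFC 8216 advises, the start position alone sets a floor on delay before encoding, packaging, and network time are even counted. The function name and the `overhead_s` parameter are illustrative.

```python
def hls_live_delay_s(target_duration_s: float, segments_behind: int = 3,
                     overhead_s: float = 0.0) -> float:
    """Lower bound on live delay implied by the player's start position.

    segments_behind=3 reflects RFC 8216's advice not to start closer than
    three target durations to the end of the playlist; overhead_s is a
    hypothetical allowance for encoding, packaging, and network time.
    """
    return segments_behind * target_duration_s + overhead_s


for seg in (6, 4, 2):
    print(f"{seg} s segments -> at least {hls_live_delay_s(seg):.0f} s behind live")
```

With 6-second segments that floor is 18 seconds; even 2-second segments leave 6 seconds. Low-Latency HLS reduces this with partial segments, at the cost of more complex servers and players.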

Mobile applications are in a completely different position. They are not constrained by the browser stack and can use native video playback libraries. On Android, these are often players built on libVLC or FFmpeg, which can work directly with UDP, RTSP, and, in builds compiled with libsrt, SRT. These players let developers control buffering, define an exact latency window, and choose what to sacrifice: resilience or delay.

Mobile apps also have more direct access to the operating system’s network stack. They can adapt better to mobile network specifics, react faster to changes in channel quality, and use optimizations unavailable to browsers. Combined with SRT, this results in a noticeable latency advantage. In real surveillance systems, camera-to-smartphone delay with SRT often falls in the 1–2 second range. This is close to the practical limit without complex bidirectional protocols like WebRTC.
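One way to see where a 1–2 second figure comes from is a per-stage latency budget. The stage names and numbers below are hypothetical illustrations, not measurements, and the model simply assumes stage delays add up; the point is that the SRT window is one configurable term among several that the app cannot change.

```python
from collections.abc import Mapping

# Hypothetical per-stage delays in milliseconds, for illustration only
EXAMPLE_BUDGET_MS = {
    "camera encode": 300,
    "RTSP ingest to server": 100,
    "SRT latency window": 500,
    "decode and render": 200,
}


def glass_to_glass_ms(stages: Mapping[str, float]) -> float:
    """Total camera-to-screen delay as the sum of independent stage delays."""
    return sum(stages.values())


def largest_stage(stages: Mapping[str, float]) -> str:
    """Name of the stage contributing the most delay."""
    return max(stages, key=stages.__getitem__)


print(glass_to_glass_ms(EXAMPLE_BUDGET_MS))  # 1100
print(largest_stage(EXAMPLE_BUDGET_MS))      # SRT latency window
```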

It’s important to understand that browsers are not “bad” or “slow.” They simply solve a different problem. The browser stack is optimized for security, compatibility, and scale, and ordinary web pages cannot open raw UDP sockets, so a protocol like SRT cannot run inside a browser at all. Mobile applications, on the other hand, can afford to be more specialized and aggressive. That is why the surveillance industry increasingly uses different protocols for different clients.

5. VSaaS Architecture: Two Protocols, One User Experience

Modern VSaaS platforms rarely bet on a single video delivery protocol. Instead, they build layered architectures where each client receives video in the format best suited to its capabilities and constraints. A typical architecture includes the camera, a cloud backend, a media layer, and client applications.

Cameras usually continue to stream via RTSP. It is a proven and widely supported protocol that works well inside local networks and between camera and server. The stream then reaches an edge or cloud server, which performs multiple functions: authentication, access control, connection accounting, and, if necessary, video relaying. This is where the protocol choice for the client happens.

For mobile applications, the server typically offers SRT or WebRTC. SRT is chosen where simplicity, predictability, and latency control matter most. The client connects via SRT, receives the stream with minimal buffering, and sees live video almost in real time. For browsers, the server offers HLS, sometimes in a low-latency configuration. This ensures compatibility with any device and allows the system to scale to thousands of users via CDNs.
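The per-client protocol choice described above amounts to a small dispatch rule on the server. This sketch is hypothetical: the `Client` enum, the `needs_two_way` and `low_latency` flags, and the returned labels are illustrative, and it encodes only the policy this section describes (SRT or WebRTC for mobile apps, HLS or its low-latency variant for browsers).

```python
from enum import Enum


class Client(Enum):
    MOBILE_APP = "mobile_app"
    BROWSER = "browser"


def pick_delivery(client: Client, needs_two_way: bool = False,
                  low_latency: bool = False) -> str:
    """Hypothetical per-client policy: SRT for apps, HLS for browsers."""
    if client is Client.BROWSER:
        # The <video> element cannot play SRT, so browsers get HLS (optionally low-latency)
        return "ll-hls" if low_latency else "hls"
    # Native apps: SRT by default, WebRTC when two-way interaction is required
    return "webrtc" if needs_two_way else "srt"


print(pick_delivery(Client.MOBILE_APP))                 # srt
print(pick_delivery(Client.BROWSER, low_latency=True))  # ll-hls
```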

From the user’s perspective, this looks like a single service. They open a camera in a mobile app or a browser and see video. Differences in protocols, latency, and buffering are hidden inside the architecture. This approach is now widely regarded as the mature, production-grade pattern: it acknowledges platform limitations and exploits each platform’s strengths instead of chasing a one-size-fits-all solution.

SRT occupies a clearly defined niche in this architecture. It does not replace HLS; it complements it. It does not try to be universal; it solves one specific problem: delivering live video with minimal latency to controlled clients. That is why SRT has taken root so well in mobile surveillance applications.

6. The Future of Live Video

SRT is often perceived as a temporary trend or a niche solution. But viewed in the context of surveillance evolution, it is a natural step. The industry has moved from local systems to cloud platforms, from monitors to mobile apps, from archives to real-time live interfaces. At each stage, video delivery requirements changed, and SRT turned out to be the tool that best matches today’s expectations for live mobile viewing.

This does not mean SRT will replace all other protocols. HLS will remain the backbone for browsers and mass access. WebRTC will be used where bidirectional communication and ultra-low latency are required at any cost. RTSP will continue to live inside cameras and local networks. But SRT has secured a stable position between these worlds, offering an optimal balance for mobile and operator scenarios.

The key lesson is that there is no single “correct” protocol in video surveillance anymore. There is architecture, in which each protocol is used where it makes the most sense. SRT is neither a magic wand nor “true P2P.” It is a reliable transport that, when integrated into a well-designed VSaaS architecture, brings live video close to real time over public networks.

That is why mobile apps will continue to feel more “live” than browsers, why “P2P” in cameras almost always implies the presence of the cloud, and why SRT is no longer seen as an experiment but as a working tool of the modern video surveillance industry.